- 28 February 2017

- Imagination Technologies

The title of this post uses the word ‘revelation’ instead of ‘revolution’ because mobile game engines are only inching their way toward using full-on ray tracing. The mobile game community wants ray tracing, but the roadblock has been the lack of hardware fast enough to keep up with the challenging frame rates. This is where Imagination’s mobile-optimised PowerVR Wizard ray tracing hardware IP comes into the picture.

This post has two parts written from different points of view. The first part discusses issues related to the artist and the production of game scene assets: geometry, colour, texture, surface properties and light sources. The second part describes the software engineering project to integrate the Unreal Engine with Imagination’s PowerVR GR6500 Wizard development hardware and its Vulkan driver, which carries the proposed ray tracing API extensions, in order to demonstrate ray tracing functionality running in a well-known game engine.

Part 1: Art (game artist lament: “I just want to make it pretty, what’s all this then?”)

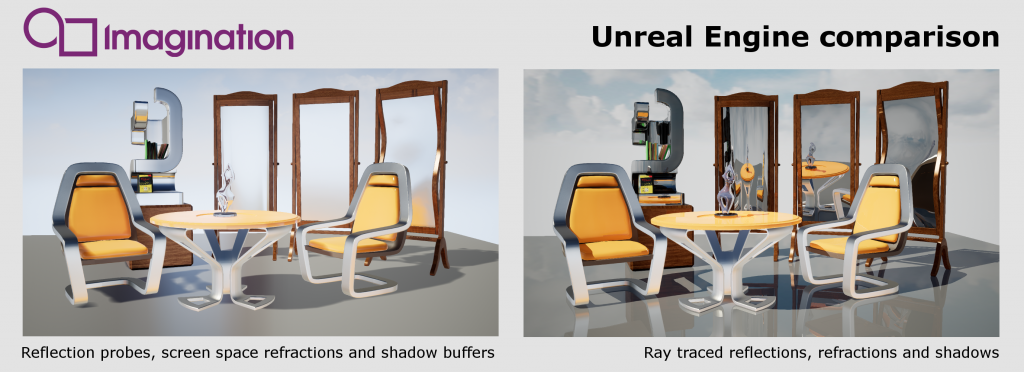

There is no doubt that game production artists want ray tracing (a one-button solution for accurate reflections, refractions and shadow casting). It would free up much of their time to concentrate on aesthetics rather than massaging their content to accommodate the trickery that can make scenes look more realistic.

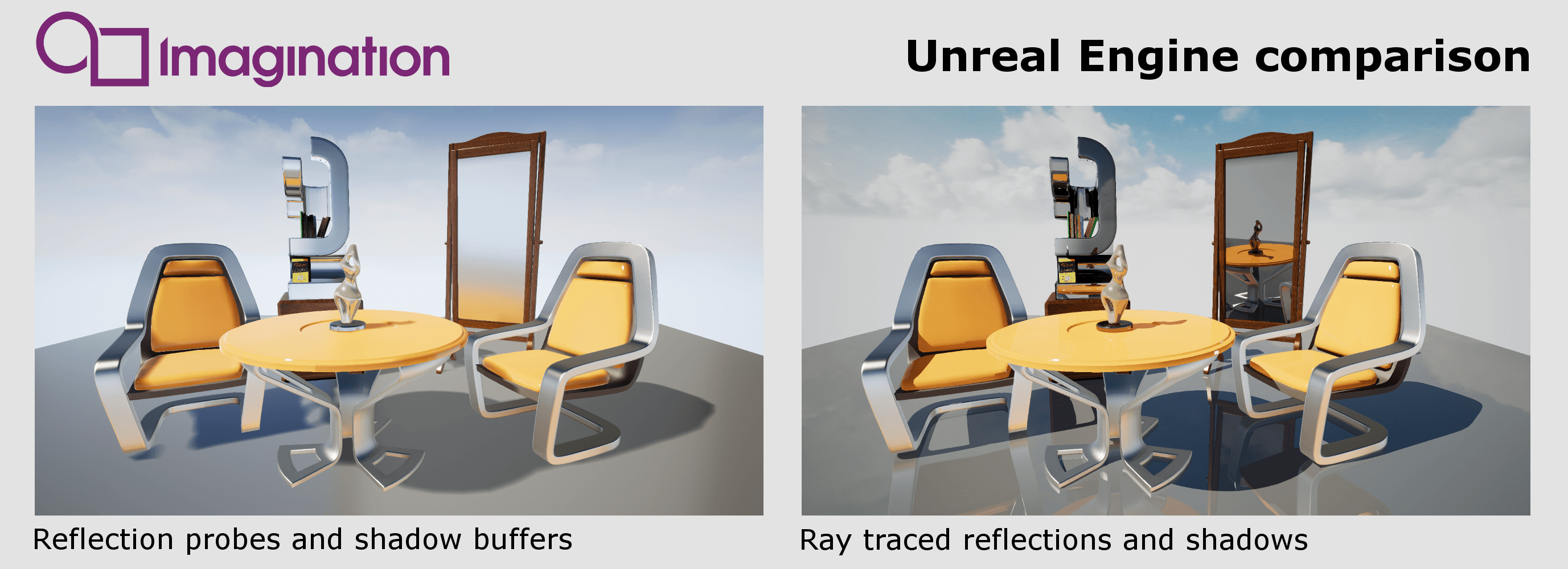

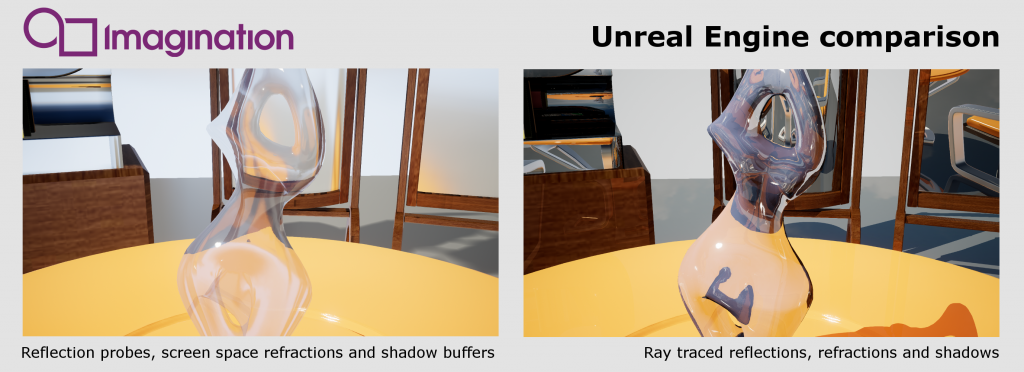

It’s important to note that although ray tracing workarounds like reflection probes, screen space and planar reflections add a tremendous dazzle, they have obvious optical limitations. With screen space, reflections of objects that are not visible on-screen disappear. The back of an object is also not reflected. Planar reflections are accomplished in a brute force manner by re-rendering the scene from a cleverly repositioned camera. However, they only work on flat surfaces and in fact are usually disabled on mobile platforms due to performance limitations. Production artists spend much of their time carefully positioning and testing reflection probe nodes and other light emitting nodes to make the scene more visually striking. Of course, if the game is intended for a mobile device you have to limit the number of reflection probes because they need to be re-generated every frame to include any animation. Some of these admittedly ingenious workarounds require hundreds of hours of pre-processing or baking in order to accomplish the intended results. All this, of course, needs people to watch over the process and confirm that the precooked assets work as intended.
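As a rough illustration of the "cleverly repositioned camera" behind planar reflections, the sketch below mirrors a camera position and view direction across a flat reflector. The function names and the plane representation (a point on the plane plus a unit normal) are assumptions made for this example, not engine code.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def mirror_point(p, plane_point, n):
    """Mirror point p across the plane through plane_point with unit normal n."""
    d = dot([pi - qi for pi, qi in zip(p, plane_point)], n)
    return [pi - 2.0 * d * ni for pi, ni in zip(p, n)]

def mirror_direction(v, n):
    """Mirror direction v across a plane with unit normal n."""
    d = dot(v, n)
    return [vi - 2.0 * d * ni for vi, ni in zip(v, n)]

# Hypothetical camera 2 units above a floor at y = 0, looking down and forward.
camera_pos = [1.0, 2.0, 3.0]
camera_dir = [0.0, -0.6, 0.8]
floor_point = [0.0, 0.0, 0.0]
floor_normal = [0.0, 1.0, 0.0]

# The scene is re-rendered from this mirrored camera to produce the reflection.
mirrored_pos = mirror_point(camera_pos, floor_point, floor_normal)
mirrored_dir = mirror_direction(camera_dir, floor_normal)
```

Because the construction relies on a single mirror plane, it has no equivalent for curved reflectors, which is consistent with the flat-surface limitation described above.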

It is also verifiable that ray traced shadows are more accurate and require less intensive processing, which results in lower power consumption as well as improved performance. Currently, setting up a non ray traced shadow buffer is a finicky and often glitch-prone process. Essentially, shadow buffers are depth image files representing the distance from a light’s point of view. They usually do not encompass a large area of a game’s scene due to practical limitations of file sizes. They are precooked for static objects, but for animating objects they have to be re-cooked every frame, which can be quite processor intensive. Also, transparent objects can be problematic, particularly if the shadow has to inherit colour properties. Proper distance blurred shadows add a level of realism but are not possible to create without ray tracing or on-the-fly depth measurements. All this goes away when the rendering engine uses full-on ray tracing for shadows. (Note that at the time this post was written we had not yet added our hybrid single-ray soft shadow algorithm to the Unreal Engine support.) In order to significantly improve shadow-casting performance, Imagination’s ray tracing implementation treats shadow rays in a special way, particularly for opaque objects.
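The post does not say how the hardware treats shadow rays, so the following is only a generic illustration of why opaque occluders are cheap to handle. A shadow ray needs to know whether anything blocks the light, not which hit is closest, so the search can stop at the first opaque hit. The sphere occluders and all function names here are assumptions for the sketch.

```python
import math

def ray_hits_sphere(origin, direction, centre, radius, max_t):
    """True if a unit-direction ray hits the sphere at a distance in (eps, max_t)."""
    oc = [o - c for o, c in zip(origin, centre)]
    b = sum(x * d for x, d in zip(oc, direction))
    c = sum(x * x for x in oc) - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return False
    root = math.sqrt(disc)
    eps = 1e-4  # avoid re-hitting the surface the ray starts on
    return any(eps < t < max_t for t in (-b - root, -b + root))

def in_shadow(point, light_pos, occluders):
    """Cast one shadow ray toward the light; stop at the first opaque occluder."""
    to_light = [l - p for l, p in zip(light_pos, point)]
    dist = math.sqrt(sum(x * x for x in to_light))
    direction = [x / dist for x in to_light]
    # any() short-circuits, so later occluders are never tested after a hit.
    return any(ray_hits_sphere(point, direction, c, r, dist) for c, r in occluders)

# Hypothetical scene: one opaque sphere floating between the floor and a light.
occluders = [([0.0, 1.0, 0.0], 0.5)]
light = [0.0, 5.0, 0.0]
under_sphere = in_shadow([0.0, 0.0, 0.0], light, occluders)
off_to_side = in_shadow([3.0, 0.0, 0.0], light, occluders)
```

Unlike a shadow buffer, nothing here is precomputed or limited in extent: moving the sphere or the light changes the answer on the next ray.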

The rest of the scene prep pipeline (texturing, modelling, lighting and surface look development) would only be streamlined by a ray tracing capable engine. The game artist community is anxiously waiting for mobile hardware that can accommodate ray tracing. Even with ray tracing features, there is still a lot of work to be done, but more time could be spent on making the game pretty.

Part 2: Science (and now the real story)

The goal of this project was to make sure the ray tracing effects were integrated as first class citizens alongside the existing raster effects. For example, when material surface properties require reflection, a variant of the material shader is compiled to cast the appropriate reflection rays. We took great pains to respect the architecture of Unreal Engine so that all the effects would play nicely together. This means your game can opt to use ray traced reflections with shadow mapped shadows in the same scene at the same time. To make it all work, the engine compiles ray tracing variants of the material shaders that run when secondary rays intersect the object in the scene.

Unreal Engine 4 has supported desktop Linux with OpenGL 3.x and 4.x for some time. Late in 2015, initial support was also added for Vulkan 1.0, on Windows and Android only, and Epic officially announced it at MWC in February 2016 with the ProtoStar demo on the Samsung Galaxy S7. Around the same time, Yaakuro from the Unreal Engine 4 GitHub community did the work to make the engine run with Vulkan on desktop Linux, and demonstrated it running on an nVidia desktop GPU.

In May 2016, we embarked on a project to get Unreal Engine 4 running on our PowerVR GR6500 evaluation system, initially in regular raster mode, but with a view to adding support for our ray-tracing functionality at a later date. Due to the structure of the Unreal Engine 4 code-base, which by default supports OpenGL ES 2.x and 3.x only on mobile platforms, and only desktop OpenGL 4.x on Linux, we also decided to jump straight to Vulkan as a more platform-agnostic API to give an easier path to supporting our “mobile” GPU on a desktop Linux host computer.

It only took a couple of weeks of effort to resurrect Yaakuro’s work and bring it up to date with the current Unreal Engine 4 code-base, to get a baseline of the engine running using the Vulkan API on desktop Linux using an nVidia GPU. From there, it was very straightforward to change the Vulkan device management to instead use the GR6500 card using Imagination Technologies’ own Vulkan driver implementation, requiring only minor cleverness with the SDL library to fool the engine into thinking it was still rendering into an X window, while actually rendering on the evaluation system’s HDMI output.

There was then a pause in the project as we became more familiar with the Unreal Engine 4 code-base and worked steadily to complete and stabilise the Vulkan driver in conjunction with our engineers in the UK. At the same time, we started planning the addition of basic ray tracing functionality to the engine using the Vulkan ray tracing extension.

The initial stages involved adapting the engine’s render pass mechanism to perform a ray tracing scene build operation. The Vulkan ray tracing extension API makes this very easy, as the code flow required is very similar to an existing Vulkan raster render pass. It requires only that the render sequence be wrapped in begin/end commands, and that a different Vulkan pipeline object be used, with vertex and ray shaders instead of the regular raster shaders. We were able to reuse the existing “static mesh” geometry draw loop from the engine code, adapting it only to remove the frustum culling visibility checks, as we desired all geometry to be rendered into the ray tracing scene hierarchy.
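The idea of sharing one draw loop between the raster pass and the scene build pass can be sketched as follows. This is a toy model, not Unreal Engine code: the `Mesh` type, the bounding-sphere test and the `ray_scene_build` flag are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass

@dataclass
class Mesh:
    name: str
    centre: tuple   # bounding-sphere centre in world space
    radius: float   # bounding-sphere radius

def sphere_in_frustum(mesh, planes):
    """Conservative test of a bounding sphere against inward-facing planes (nx, ny, nz, d)."""
    cx, cy, cz = mesh.centre
    for nx, ny, nz, d in planes:
        if nx * cx + ny * cy + nz * cz + d < -mesh.radius:
            return False
    return True

def collect_draw_list(meshes, planes, ray_scene_build):
    """Shared draw loop: the scene build pass skips the visibility check."""
    return [m.name for m in meshes
            if ray_scene_build or sphere_in_frustum(m, planes)]

# One plane stands in for a full frustum: keep what lies in front of a
# camera at the origin looking down -z.
planes = [(0.0, 0.0, -1.0, 0.0)]
meshes = [
    Mesh("statue", (0.0, 0.0, -5.0), 1.0),
    Mesh("wall_behind_camera", (0.0, 0.0, 5.0), 1.0),
]

raster_list = collect_draw_list(meshes, planes, ray_scene_build=False)
ray_list = collect_draw_list(meshes, planes, ray_scene_build=True)
```

The wall behind the camera is dropped from the raster list but kept in the ray tracing scene, where a reflection or shadow ray may still reach it.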

We were able to use exactly the same vertex shader (generated and compiled by the engine) as used for raster rendering, merely changing the uniform values that control the vertex transforms in order that the vertex output is in world space instead of projected screen space. Initially, we used a hand-written ray shader with uniforms and other bindings controlled by our own code, with the only requirement being that the vertex attribute structure had to match that of the vertex shader (this was achieved by simply parsing the GLSL source for the vertex shader prior to letting the engine compile it).
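One way the same vertex shader could produce world-space output purely through its uniforms is to supply an identity matrix where the raster pass supplies the view-projection matrix. The post does not state which uniforms were changed, so the matrix names and the stand-in projection below are assumptions for illustration.

```python
def mat_vec(m, v):
    """Multiply a 4x4 row-major matrix by a 4-component column vector."""
    return [sum(m[r][c] * v[c] for c in range(4)) for r in range(4)]

def vertex_shader(position, model, view_proj):
    """Same code for both passes; only the uniform values differ."""
    world = mat_vec(model, position + [1.0])
    return mat_vec(view_proj, world)

IDENTITY = [[1.0 if r == c else 0.0 for c in range(4)] for r in range(4)]

def translation(tx, ty, tz):
    m = [row[:] for row in IDENTITY]
    m[0][3], m[1][3], m[2][3] = tx, ty, tz
    return m

def uniform_scale(s):
    m = [row[:] for row in IDENTITY]
    m[0][0] = m[1][1] = m[2][2] = s
    return m

model = translation(2.0, 0.0, 0.0)
raster_view_proj = uniform_scale(0.5)   # stand-in for a real view-projection

vertex = [1.0, 0.0, 0.0]
screen_output = vertex_shader(vertex, model, raster_view_proj)  # raster pass
world_output = vertex_shader(vertex, model, IDENTITY)           # scene build pass
```

With the identity in place of the view-projection matrix, the shader output is simply the model transform applied to the vertex, which is the world-space position the ray tracing scene hierarchy needs.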

This gave us a one-shot scene build and a ray tracing render with the hard-wired ray shader, to which we added basic colour, lighting, shadowing, and reflections. The final step was then a presentation pass by which the final ray tracing image could be drawn over the top of the regular raster image in the game viewport.

The next step was to enable the engine to generate ray shaders itself from the actual scene materials. The material and shader cross-compiler mechanism was adapted to add the extra shader type, although the upper levels of the engine simply regarded it as a second pixel (fragment) shader, derived from an adapted version of the USF shader file that defines the regular pixel shader. The Vulkan RHI layer then converts this second USF pixel shader to GLSL and then transforms that GLSL source code into a ray shader prior to further compiling it to SPIR-V. The material code in the higher levels of the engine then simply creates a second copy of the pixel shader uniforms (since the parameters are the same) and passes them down to the RHI to what it believes is a second pixel shader but which is actually the ray shader. Similarly, it binds exactly the same textures and samplers as are bound to the pixel shader.

This resulted in a ray tracing render, which, by default, duplicated the regular pixel shader behaviour almost exactly, including mapped/CSM shadows, reflection probe reflections, and all the same lighting variants (light map, directional light, optional point lights), while still being a full ray-traced render.

The specifics of the ray tracing itself (the management of input and output rays, and the other ray-tracing specific bindings to the ray shader) were hidden by the use of custom USF intrinsic functions defined by adapting the engine’s HLSL parser. This enabled the USF shader code to contain functions for checking incoming ray depth (for reflection culling), emitting reflection and shadow rays and accumulating a final colour to the ray tracing accumulation buffer, without using illegal syntax. Those function calls get converted verbatim to GLSL, and the implementation of those functions is added to the GLSL code as part of the conversion of the code from pixel shader to ray shader.
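The ray depth check used for reflection culling can be illustrated with a small model. It is written recursively for brevity, whereas the post describes rays accumulating into an accumulation buffer, and it assumes every reflection ray lands on the same material. `MAX_RAY_DEPTH` and `shade_hit` are hypothetical names, not the actual USF intrinsics.

```python
MAX_RAY_DEPTH = 2  # hypothetical cut-off for reflection bounces

def shade_hit(ray_depth, surface_colour, reflectivity):
    """Shade one hit; emit a reflection ray only while under the depth limit."""
    colour = surface_colour
    if reflectivity > 0.0 and ray_depth < MAX_RAY_DEPTH:
        # The reflected ray arrives one level deeper than the incoming ray.
        colour += reflectivity * shade_hit(ray_depth + 1, surface_colour, reflectivity)
    return colour

# A primary ray (depth 0) in a hall of mirrors: two bounces, then culled.
primary = shade_hit(0, 1.0, 0.5)
# A ray that already arrives at the limit emits no further reflection.
at_limit = shade_hit(MAX_RAY_DEPTH, 1.0, 0.5)
```

Without the depth test, two facing mirrors would keep emitting rays indefinitely, so a check of this kind bounds the work per pixel.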

With some pre-processor improvisation, this enabled the USF “mobile base pass” pixel shader code to be used to also create basic ray shaders with barely 10 lines of code added or adapted, to get ray traced reflections and image accumulation. Some deeper restructuring of that USF code was required to add ray traced shadows, but only to move the lighting computation to before the shadow computation, so that it was possible to do a run-time switch to choose either raster (map/CSM lookup) or ray traced (deferred accumulation) shadows.
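The reason for computing lighting before shadowing can be sketched as below. This reads "deferred accumulation" as meaning that the lit colour travels with the shadow ray and is added to the image only if the ray reaches the light. That reading, and every name in the sketch, is an assumption rather than the actual USF code.

```python
def shade_pixel(lit_colour, use_ray_traced_shadows,
                shadow_map_factor, shadow_ray_reaches_light):
    """Run-time switch between raster and ray traced shadows."""
    accumulation = [0.0, 0.0, 0.0]
    if use_ray_traced_shadows:
        # The lit colour is already known, so it can ride along with the
        # shadow ray and be accumulated only when the light is visible.
        if shadow_ray_reaches_light:
            accumulation = [a + c for a, c in zip(accumulation, lit_colour)]
    else:
        # Raster path: attenuate immediately with the shadow map/CSM lookup.
        accumulation = [a + c * shadow_map_factor
                        for a, c in zip(accumulation, lit_colour)]
    return accumulation

lit = [1.0, 0.5, 0.25]
raster_result = shade_pixel(lit, False, 0.5, True)
ray_lit_result = shade_pixel(lit, True, 0.5, True)
ray_shadowed_result = shade_pixel(lit, True, 0.5, False)
```

If the shadow term were computed first, as in a typical raster shader, the ray traced path would have no finished colour to hand to the shadow ray, which is why the reordering matters.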

The demo showing at our GDC 17 booth this year is an expanded version of one of the regular engine tutorial scenes. The engine can be switched between raster mode and full ray traced mode on the fly. While in ray traced mode, one can then select between raster and ray traced shadows, and between probed and ray traced reflections, again on the fly. We have also added some extra material parameters to enable the selection of render mode on a per-material basis. For example, since this initial ray tracing reflections implementation does not yet support glossy (blurred) reflections, we show how some materials can continue to use blurred probe reflections, while others use ray tracing to get true sharp reflections.

Finally, we demonstrate the flexibility of ray tracing with respect to alternative camera models with a fourth render mode, which renders the scene using a true 360-degree spherical lens in a single render.
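With ray tracing, a camera model is just a rule for turning a pixel into a ray direction, which is why a spherical lens needs only one render. The sketch below assumes an equirectangular (latitude/longitude) mapping with -z as forward and +y as up. The post does not say which projection the demo uses, so treat the mapping and the function name as illustrative.

```python
import math

def spherical_ray_direction(u, v):
    """Map normalised image coordinates u, v in [0, 1] to a unit ray direction.

    u sweeps 360 degrees of longitude, v sweeps 180 degrees of latitude.
    """
    longitude = (u - 0.5) * 2.0 * math.pi
    latitude = (0.5 - v) * math.pi
    cos_lat = math.cos(latitude)
    return (cos_lat * math.sin(longitude),
            math.sin(latitude),
            -cos_lat * math.cos(longitude))

forward = spherical_ray_direction(0.5, 0.5)   # image centre
right = spherical_ray_direction(0.75, 0.5)    # a quarter turn to the right
up = spherical_ray_direction(0.5, 0.0)        # top edge of the image
```

A rasteriser would need several perspective renders stitched together to cover the same field of view, since a single projection matrix cannot represent this mapping.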

Future work will include dynamic geometry, support for point lights, and a reimplementation of our hybrid single-ray soft shadow algorithm.

To get a look at the demo first-hand, visit the Imagination Technologies booth (#532) at the GDC 17 Expo.

Post written by Will Anielewicz, Senior Software Design Engineer, PowerVR, Imagination and Simon Eves, Senior Software Design Engineer, PowerVR, Imagination

Will Anielewicz:

Will has been playing with computers since 1967. After completing an Honors Computer Science degree at York University in 1974, he was a multi-discipline Masters candidate combining Fine Arts, Computer Science and Philosophy. The mandate was to create a computer graphics piano that could be used in live concert performances. In 1976 Will had one of the world’s first exhibitions of computer graphic art, at an art gallery in Toronto, Canada. In 1982 Will was hired as the first employee of Alias Research, the creator of Maya. Since then, Will has worked on 14 feature films (such as “Phantom Menace”) and several award-winning commercials. Will currently works for Imagination Technologies in the PowerVR ray tracing division as a senior software engineer. His goal is to continue to push the envelope of computer-generated imagery, particularly related to computer vision and augmented reality.

Simon Eves:

Simon has been a senior graphics engineer at Imagination Technologies in San Francisco for six years, after fifteen years as a software engineer and technical artist for visual effects for movies and TV, in both London (Cinesite) and the Bay Area (Industrial Light & Magic, among others). He was the first hire by Imagination San Francisco after its acquisition of Caustic Graphics, the original start-up that developed the hardware ray tracing architecture that became PowerVR Wizard.