- 23 March 2018

- Benjamin Anuworakarn

If you’re developing for Unity on mobile, this is a blog post you can’t afford to miss! Recently we gave you some PowerVR performance tips for Unreal Engine 4. However, if Unity is more your thing, we’re now going to share with you the Unity performance-boosting tips from our very useful PowerVR Performance Recommendations document.

Most optimisations apply to all mobile platforms, but there are a few PowerVR-specific ones that we’ll mark as such. That said, it is generally good practice to apply these suggestions regardless of your target platform, as you should see performance benefits in almost all cases.

Build Settings

Texture Compression

First off, always make sure you use texture compression. This not only saves space but also saves bandwidth at runtime. It’s one of the best ways to increase performance and save battery life. The advantage of compressed textures is that they will stay compressed until the very moment they are needed to process a fragment.

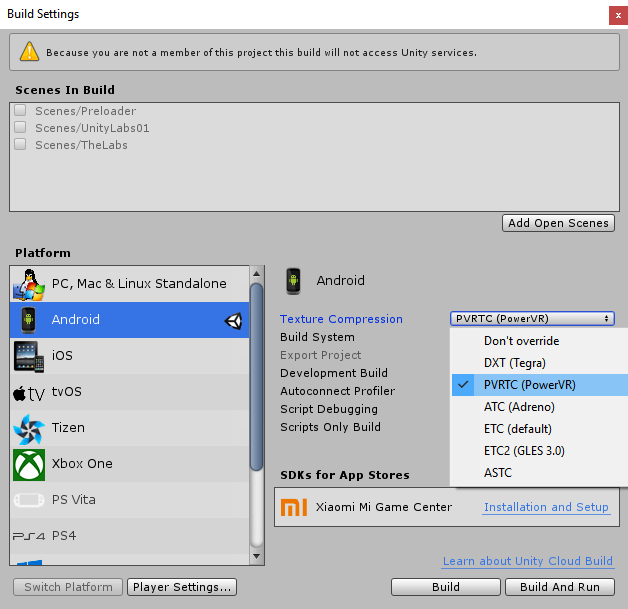

Unity supports a variety of texture compression methods. By default Unity selects ETC; however, you can override it as in the screenshot below:

The compression format options are as follows:

- ETC is a texture compression format supported on all devices. It is superseded by ETC2 in terms of quality and size. While ETC is simple and has widespread support, it doesn’t support an alpha channel and its compression rate is not that great.

- PVRTC is a texture compression format supported exclusively by PowerVR hardware. It supports an alpha channel, has one of the best size-to-quality ratios and is highly configurable to match your quality/size needs.

- ASTC is an open format supported by most platforms. It supports an alpha channel, and has a compression rate and configurability comparable to PVRTC.

- DXT is a compression format widely supported on desktop. On mobile, due to licensing issues, it is only supported by Nvidia Tegra devices.

- ATC is a texture compression format supported only on Qualcomm Adreno devices.
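To get a feel for the savings, here is a minimal sketch that compares the size of a 1024x1024 texture across formats. The bits-per-pixel figures are the standard fixed rates for each format (they are not taken from the recommendations document), the function name `texture_bytes` is ours, and only the top mip level is counted.

```python
# Fixed storage rates, in bits per pixel, for some common texture formats.
# Block-compressed formats have a constant rate, so size depends only on
# the texture dimensions, not on its content.
BITS_PER_PIXEL = {
    "RGBA32 (uncompressed)": 32,
    "ETC1 RGB": 4,
    "ETC2 RGBA8": 8,
    "PVRTC 4bpp": 4,
    "PVRTC 2bpp": 2,
    "ASTC 4x4": 8,
    "ASTC 8x8": 2,
    "DXT1": 4,
    "DXT5": 8,
}


def texture_bytes(width, height, bits_per_pixel):
    """Return the size in bytes of the top mip level only."""
    return width * height * bits_per_pixel // 8


if __name__ == "__main__":
    for name, bpp in BITS_PER_PIXEL.items():
        size_kib = texture_bytes(1024, 1024, bpp) / 1024
        print(f"{name:24s} {size_kib:6.0f} KiB")
```

With these rates, a 4 bpp format is an eighth of the size of the uncompressed texture, and every sample the GPU fetches costs correspondingly less bandwidth.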

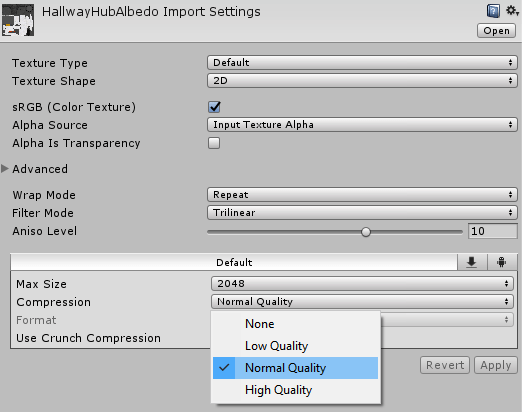

While the texture format setting is global, texture compression quality can be adjusted per texture as in the screenshot below:

Quality Settings

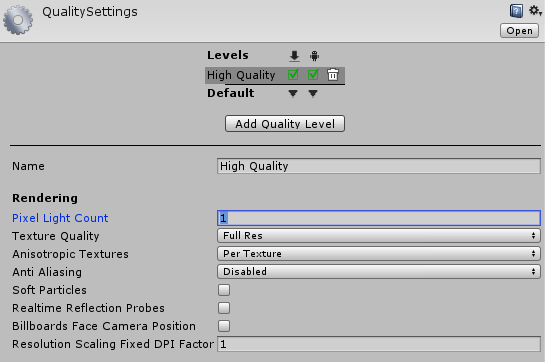

One of the more general optimisations is to lower the number of lights allowed per pixel. The number of lights allowed will directly affect the GPU workload, as each object in the scene has to be rendered every time a light touches it. This means one pass per light for each mesh, capped by the maximum number of lights affecting a mesh set in the Pixel Light Count option. If the number of lights affecting a mesh exceeds the cap, only the most important lights are rendered for each mesh. You might be able to increase the total number of lights active at any given time if the lights are evenly distributed and don’t overlap.
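The effect of the cap can be sketched with a few lines of arithmetic. This follows the simplified model described above (one pass per light per mesh, capped by Pixel Light Count); the function name and the example scene are hypothetical, and real pass counts also depend on light types and shader setup.

```python
def forward_light_passes(lights_per_mesh, pixel_light_count):
    """Estimate lighting passes under a simplified one-pass-per-light model.

    lights_per_mesh: number of lights touching each mesh in the scene.
    pixel_light_count: the per-mesh cap on per-pixel lights.
    """
    return sum(min(lights, pixel_light_count) for lights in lights_per_mesh)


if __name__ == "__main__":
    # Hypothetical scene: four meshes touched by 1, 3, 6 and 2 lights.
    scene = [1, 3, 6, 2]
    for cap in (4, 2, 1):
        passes = forward_light_passes(scene, cap)
        print(f"Pixel Light Count {cap}: {passes} passes")
```

In this example, lowering the cap from 4 to 1 cuts the estimated pass count from 10 to 4. Meshes touched by a single light are unaffected, which is why evenly distributed, non-overlapping lights are cheap.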

To change the number of lights allowed per pixel see the screenshot below:

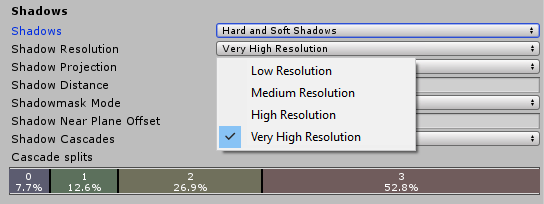

Make sure shadow map resolution is ‘just right’: use the lowest setting that still looks good enough. If the shadow map resolution is too high, it not only wastes memory and bandwidth but also hurts cache efficiency.
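As a rough illustration of why resolution matters, the sketch below estimates the memory footprint of a square shadow map. The 2 bytes per texel (a 16-bit depth format) is an assumption, since the actual format depends on the platform, and the resolutions listed are illustrative rather than the exact sizes Unity picks for each quality level.

```python
def shadow_map_bytes(resolution, bytes_per_texel=2):
    """Memory for a square shadow map; bytes_per_texel is an assumption."""
    return resolution * resolution * bytes_per_texel


if __name__ == "__main__":
    for resolution in (512, 1024, 2048, 4096):
        mib = shadow_map_bytes(resolution) / (1024 * 1024)
        print(f"{resolution} x {resolution}: {mib:.1f} MiB")
```

Each doubling of the resolution quadruples the memory that has to be written when the shadow map is rendered and read back when it is sampled.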

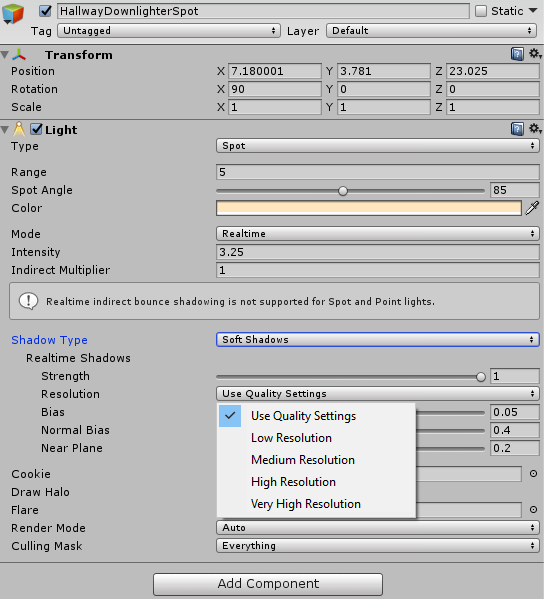

Shadow resolution can also be set per light as in the screenshot below. Individual light shadow resolution always overrides the global Quality settings, unless “Use Quality Settings” is chosen.

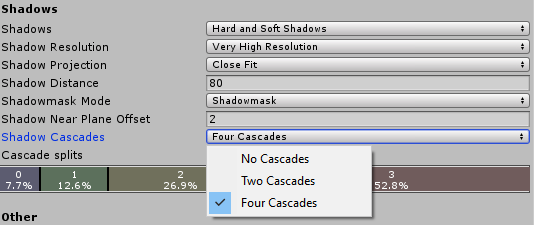

It might also be a good idea to reduce the number of shadow cascades for your directional light if the camera angle allows for it and the cascades don’t have to cover a large area. In this situation, you can lower the number of cascades from 4 to 2, or to 1 for no cascades.

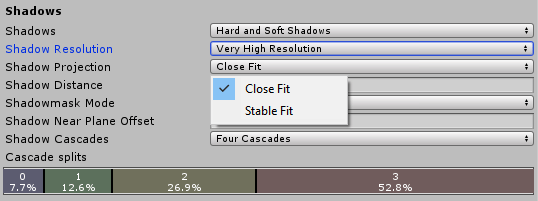

It is also possible to increase the effective shadow map resolution by changing the shadow cascade fit from ‘stable’ to ‘close’, at the cost of some temporal flickering.

- Close fit means that the shadow rendering algorithm will try to use the allocated shadow map resolution as efficiently as possible. This will result in higher quality shadows. However, this also results in some temporal flickering as the camera or the light moves. This flickering might be hidden using the Soft Shadows option.

- Stable fit means that the shadow rendering will try to stabilise the shadow edges as much as possible. This means that the shadow will not flicker as the camera or the light is moving. It also results in lower quality shadows.

The trade-off between close and stable fits needs to be considered carefully. The close fit needs lower resolution shadows to look high quality, but it also needs more filtering (soft shadows) to hide flickering. On the other hand, stable fit needs higher resolution shadows to look high quality, but it can get away with less filtering as it doesn’t need to hide flickering.

Mesh Settings

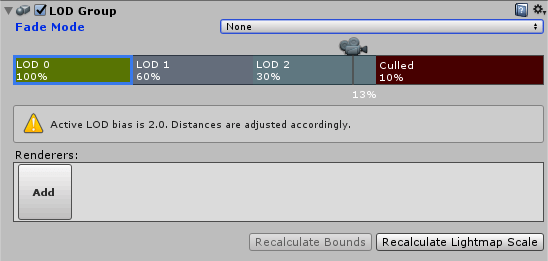

Level of Detail (LOD) groups are a great tool to manage geometry complexity. You can use them to swap out detailed geometry to less detailed as the camera moves further away from the given object. This way the amount of geometry on the screen is never more than necessary, and quality is still sufficient.

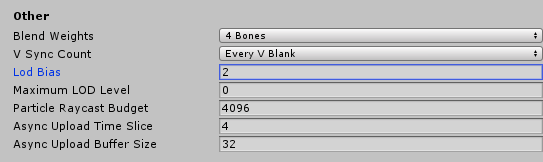

LOD groups are a quick way to optimise geometry content for mobile. Set a lower LOD bias (a value below 1) so that lower-resolution meshes are selected sooner; in Unity, values above 1 favour higher detail.

The screenshot below shows how to set the LOD bias in the Quality settings:

Note that reducing the geometry workload helps to reduce the amount of computation needed, resulting in potentially cooler devices and longer battery life.

Graphics Settings

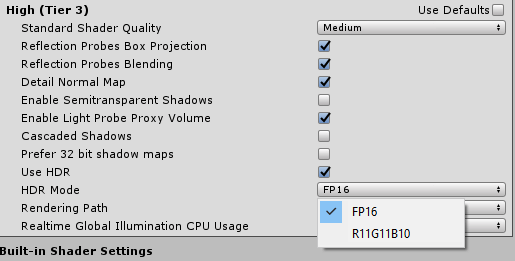

If you require HDR rendering, it might be better to use FP16 instead of RG11B10, as it gives better precision and quality.

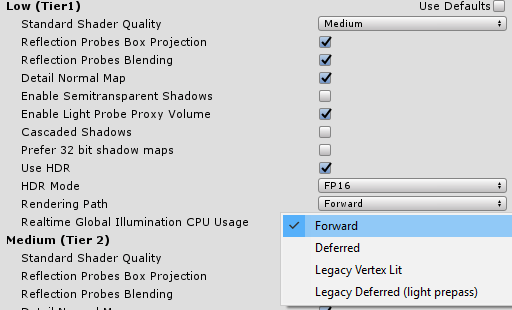

Deferred vs Forward rendering

Unity provides the option to choose between deferred and forward rendering for lights. When a scene uses many overlapping light sources, deferred rendering usually provides better performance than forward. However, at the moment, forward rendering provides better performance on mobile, as the advanced capabilities that accelerate deferred rendering on mobile, such as Pixel Local Storage (PLS), are not currently supported by Unity. For a low number of lights, forward rendering has better performance than deferred as it comes with less overhead. The screenshot below shows how to choose between deferred and forward.

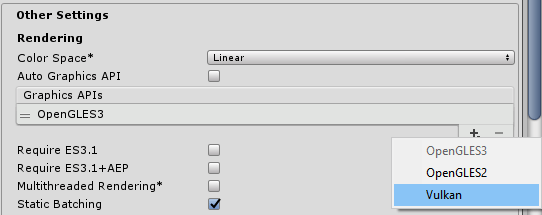

Player Settings

The latest Android devices (including PowerVR) can utilise the Vulkan API. Vulkan is great as it gives you the opportunity to reduce the CPU load and gives you more control over synchronisation. It’s also great to utilise multi-core CPUs as it allows for multi-threaded command submission to the GPU.

When selecting APIs to use, Unity will try to use the most advanced one. However, if a device doesn’t support your selected API, Unity will try to fall back to an API with wider support.

If you choose to stick with OpenGL it could be a good idea to use multi-threaded rendering. This option will make sure rendering and other work run in separate threads, meaning work will be better distributed across multiple cores. This will not be as good as Vulkan’s multithreaded command submission feature, but it will still boost your CPU performance substantially.

Shaders

Use alpha blending for transparent surfaces instead of using alpha testing/clip() in the shaders. Note that alpha testing hurts all architectures using early depth test equally. On PowerVR, opaque primitives perform depth writes before the fragment processing pipeline stage. This enables PowerVR devices to have zero overdraw for opaque primitives, saving enormous computation time and bandwidth.

However, on PowerVR, alpha-tested/discard primitives cannot write data to the depth buffer until the fragment shader has executed and fragment visibility is known. These deferred depth writes can impact performance, as subsequent primitives cannot be processed until the depth buffers are updated with the alpha-tested primitive’s values.

On all mobile architectures, it is beneficial not to use partial colour masks; it is better to use either RGBA or 0. If you use partial colour masks, the previous frame has to be read in. This is performed by a full-screen primitive reading it in as a texture. This texture must then be masked out by the partial clear, which is done by submitting another full-screen primitive as a blend.

While all mobile architectures are great at half-precision computation, PowerVR is exceptionally good at it, so make sure you use it wherever you can. Using half-precision (FP16) in shaders can result in a significant improvement in performance over high precision (FP32). This is due to the dedicated FP16 Sum of Products (SOP) arithmetic pipeline, which can perform two SOP operations in parallel per cycle, theoretically doubling the throughput of floating point operations. The FP16 SOP pipeline is available on most PowerVR Rogue graphics cores, depending on the exact variant.
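When deciding where half precision is safe, it helps to see how coarse it is. The sketch below round-trips a few values through the IEEE 754 half-precision format using Python’s `struct` module; treating shader half precision as IEEE half is an assumption, and the helper name `to_half` is ours.

```python
import struct


def to_half(value):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


if __name__ == "__main__":
    # Small values (colours, normals) survive well; large values
    # (world-space positions, big texture coordinates) lose their fraction.
    for value in (0.1, 0.5, 1024.3, 2049.7):
        print(f"{value} -> {to_half(value)}")
```

Above 1024 the spacing between representable values is already a whole unit, so values such as colours and normalised vectors are usually good candidates for FP16, while large positions or coordinates may need FP32. Treat this as a rule of thumb to verify by profiling and visual inspection.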

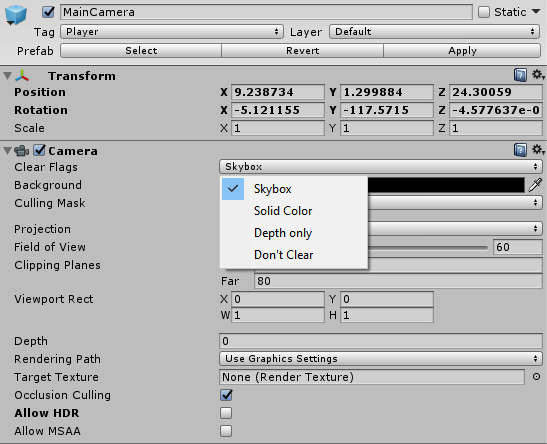

Clear flags

It is essential to make sure the screen is cleared for each pass so that the GPU doesn’t need to load the contents of the images from the previous frame buffer. Use ‘solid color’ or ‘skybox’ clearing mode to make sure the GPU doesn’t load the texture’s old contents from memory.

On PowerVR, depth prepass is a very counter-productive thing to do as the GPU has to do redundant work by performing depth testing twice and saving the depth buffer to memory. You can make sure Unity doesn’t do this by setting the camera’s clear flags to something other than ‘depth only’ or ‘don’t clear’. We strongly recommend you do not use these two modes.

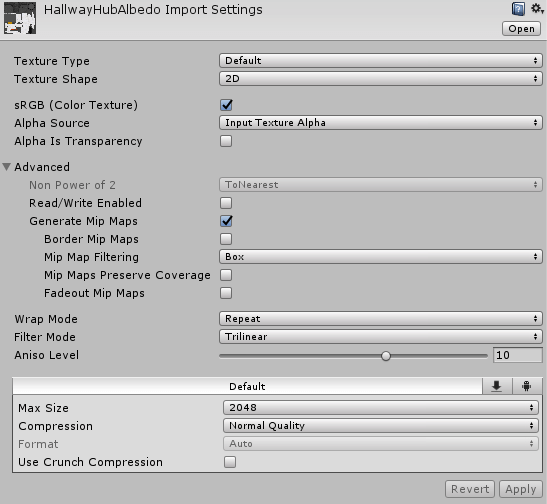

Mipmaps

Just as there’s a Level of Detail (LOD) solution for meshes (LOD groups), there’s a LOD solution for textures called mipmapping. Mipmapping works by automatically lowering the displayed texture resolution as the distance from the camera increases. This can significantly improve cache efficiency, increase performance and reduce bandwidth. See the screenshot below for how to set up mipmaps:

One thing to keep in mind when using mipmaps is that they should only be used where textures are drawn at varying sizes, which is typically the 3D elements in the scene. For 2D elements such as UI that are mapped 1:1 to the screen, they are unnecessary; if those elements are scaled, however, you will still need mipmaps.
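The memory cost of mipmaps is modest, which a short calculation makes concrete. This is a minimal sketch with hypothetical helper names; it halves each dimension (rounding down, with a minimum of 1) until the 1x1 level is reached.

```python
def mip_chain(width, height):
    """Return the (width, height) of every mip level, largest first."""
    levels = [(width, height)]
    while width > 1 or height > 1:
        width = max(1, width // 2)
        height = max(1, height // 2)
        levels.append((width, height))
    return levels


def chain_texels(width, height):
    """Total number of texels across the full mip chain."""
    return sum(w * h for w, h in mip_chain(width, height))


if __name__ == "__main__":
    base = 1024 * 1024
    total = chain_texels(1024, 1024)
    print(f"levels: {len(mip_chain(1024, 1024))}")
    print(f"overhead: {total / base - 1:.1%}")
```

For a square power-of-two texture the full chain adds roughly a third to the storage, while distant surfaces sample from much smaller levels that fit the texture cache far better.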

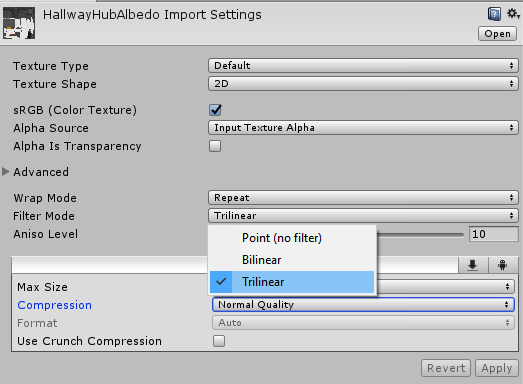

To ensure transitions between mipmap levels are seamless, make sure you are using trilinear filtering on your mipmapped assets as shown below:

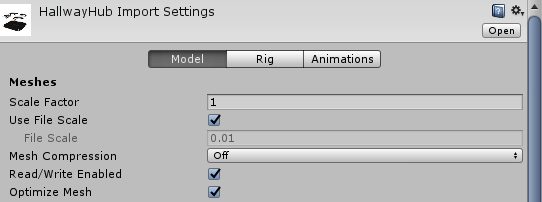

Mesh optimisation

Make sure you enable the optimise mesh option for your mesh assets. This option reorders vertices and indices so that they are friendlier to the caches in the GPUs as in the screenshot here:

Content optimisation for mobile

Mobile devices come with very particular constraints as they need to function on battery for at least a day and keep cool enough in the hand of the user. This means that when porting a desktop game to mobile, the content needs to be optimised.

First and foremost geometry complexity needs to be optimised. On desktop the usual geometry count today can be two to three million polygons visible on screen – on mobile this number is more like two to three hundred thousand polygons. After optimising the polygon count, the developer also needs to profile and verify that the vertex shaders are not overly complex.

Next, texture resolution and bandwidth usage (for example, from post-processing effects) need to be adjusted for mobile devices. On desktop, GPU memory bandwidth is two to three hundred billion bytes/second; on mobile, the available memory bandwidth, shared between the CPU and GPU, is twenty to thirty billion bytes/second. While this means that texture resolution may need to be halved, mobile screens are also much smaller (20+ inches on desktop, five inches on mobile), so smaller textures are usually still sufficient. After the adjustments, profile again to verify the results.
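To put those bandwidth figures into per-frame terms, here is a back-of-the-envelope sketch. It uses the midpoints of the ranges above (250 and 25 billion bytes/second); the 60 frames per second target, the 1920x1080 resolution and the 4 bytes per pixel are assumptions, and the function names are ours. Real budgets are lower because the CPU shares the bus and peak bandwidth is rarely sustained.

```python
def bytes_per_frame(bandwidth_bytes_per_s, fps):
    """Theoretical memory traffic available to a single frame."""
    return bandwidth_bytes_per_s / fps


def fullscreen_pass_bytes(width, height, bytes_per_pixel=4):
    """Traffic for one post-process pass: read every pixel, write every pixel."""
    return 2 * width * height * bytes_per_pixel


if __name__ == "__main__":
    pass_cost = fullscreen_pass_bytes(1920, 1080)
    for label, bandwidth in (("desktop", 250e9), ("mobile", 25e9)):
        budget = bytes_per_frame(bandwidth, fps=60)
        share = pass_cost / budget
        print(f"{label}: {budget / 1e6:.0f} MB per frame, "
              f"one full-screen pass uses {share:.1%}")
```

Under these assumptions a single full-screen pass consumes about ten times as large a share of the frame budget on mobile as on desktop, which is why stacked post-process effects are among the first things to trim.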

Summary

As we can see, Unity provides a whole range of options to optimise content and games for mobile, and these can be put to good use on PowerVR. Even if there’s no immediate performance benefit, at the very least, it is always beneficial to reduce the GPU load to the minimum you need in order to save battery power.

If you want to know more, check out our documentation.