- 17 March 2014

- Imagination Technologies

Fur effects have traditionally presented a significant challenge in real-time graphics. The latest desktop techniques employ Direct3D 11 tessellation to dynamically create hundreds of thousands of geometric hair follicles or fur strands on the fly. On mobile platforms, developers must make do with a far more restrained performance budget and significantly reduced memory bandwidth. To compound this, mobile devices are increasingly matching or surpassing the resolutions found on desktop systems.

Soft Kitty can be seen running on PowerVR Rogue GPUs

In spite of this, our new Soft Kitty OpenGL ES 3.0 demo shows that, with our latest PowerVR Series6 GPUs, it is possible to render animated, fur-covered characters in real time on mobile devices. The final demo runs at over 30 fps at resolutions higher than Full HD on a PowerVR Rogue GPU.

The cat alone features close to 200,000 triangles

The demo features a furry cat playfully chasing a laser pointer through a room in a rustic European house.

Technical Features

The demo makes use of OpenGL ES 3.0 transform feedback and instancing to make a real-time shell-fur effect possible on mobile.

Both the cat and the environment are lit with a physically based shading model and cast soft shadows into their surroundings. Every shell of the cat is lit in real time; however, the environment uses pre-computed texture maps. The character animation is performed in a transform feedback pass, where the base cat mesh is skinned with 12 bones affecting each vertex. The output is then rendered with instancing and a shell texture, which creates the fur effect.
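The skinning step described above can be sketched on the CPU as linear blend skinning with up to 12 influences per vertex. This is only an illustrative reference, not the demo's shader: the demo runs this step on the GPU in a transform feedback pass, and the function names, the 3x4 matrix layout and the sample data below are all assumptions made for the example.

```python
# CPU reference sketch of linear blend skinning (hypothetical names and layout).
# The demo itself performs this work in a transform feedback vertex shader.

MAX_INFLUENCES = 12  # bones affecting each vertex, as stated in the article


def transform_point(matrix, point):
    """Apply a 3x4 affine matrix (three rows of four floats) to a 3D point."""
    x, y, z = point
    return tuple(row[0] * x + row[1] * y + row[2] * z + row[3] for row in matrix)


def skin_vertex(position, bone_indices, bone_weights, bone_matrices):
    """Blend the position as moved by each influencing bone, scaled by its weight."""
    assert len(bone_indices) == len(bone_weights) <= MAX_INFLUENCES
    out = [0.0, 0.0, 0.0]
    for index, weight in zip(bone_indices, bone_weights):
        if weight == 0.0:
            continue  # unused influence slot
        moved = transform_point(bone_matrices[index], position)
        for axis in range(3):
            out[axis] += weight * moved[axis]
    return tuple(out)


def translation(tx, ty, tz):
    """Build a 3x4 matrix that only translates (assumed sample data)."""
    return [[1.0, 0.0, 0.0, tx], [0.0, 1.0, 0.0, ty], [0.0, 0.0, 1.0, tz]]


# Two sample bones: one at rest, one shifted along x. A vertex weighted
# equally between them lands halfway between the two transformed positions.
bones = [translation(0.0, 0.0, 0.0), translation(2.0, 0.0, 0.0)]
skinned = skin_vertex((1.0, 1.0, 1.0), [0, 1], [0.5, 0.5], bones)
print(skinned)
```

In a transform feedback setup, the equivalent of `skinned` would be captured into a buffer object rather than returned to the application, so that later passes can read it directly.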

The use of transform feedback allows the application to calculate the skinned positions of the mesh once and then reuse them for every shell. Combining this with instancing means the output only has to be submitted once, with the offset for each shell then calculated in the vertex shader. The high bone-per-vertex count has allowed a large amount of detail to be maintained in the real-time model, but it required the use of a Uniform Buffer Object (new in OpenGL ES 3.0) to pass all the required data into the transform feedback shader.
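As a rough illustration of the per-shell offset, the sketch below pushes already-skinned positions outwards along their normals, once per shell. The article does not give the demo's actual formula, so the linear spacing, the `fur_length` parameter and the function name are assumptions. On the GPU, the loop over shells would be replaced by instanced drawing, with the shell index available in the vertex shader through the GLSL ES 3.00 built-in `gl_InstanceID`.

```python
# Sketch of shell offsets computed from positions that were skinned once.
# The spacing scheme and all names are assumptions for illustration.


def shell_positions(skinned_positions, normals, shell_count, fur_length):
    """Return one list of vertex positions per shell.

    Shell 0 sits on the skin surface and the last shell sits fur_length
    away from it along the (unit length) vertex normal.
    """
    shells = []
    for shell in range(shell_count):  # on the GPU: one instance per shell
        t = shell / (shell_count - 1) if shell_count > 1 else 0.0
        offset = t * fur_length
        shells.append([
            tuple(p[axis] + n[axis] * offset for axis in range(3))
            for p, n in zip(skinned_positions, normals)
        ])
    return shells


# One vertex at the origin with an upward normal, rendered as five shells.
positions = [(0.0, 0.0, 0.0)]
normals = [(0.0, 1.0, 0.0)]
shells = shell_positions(positions, normals, shell_count=5, fur_length=0.2)
for index, shell in enumerate(shells):
    print(index, shell[0])
```

The point of the structure is that `positions` is computed a single time per frame, while the cost of each additional shell is only a multiply-add per vertex.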

Soft Kitty uses transform feedback and instancing, both features of OpenGL ES 3.0

In keeping with the pop culture reference in the name of the demo, the portrait behind the box is that of physicist Erwin Schrödinger, whose famous thought experiment illustrated the principle of superposition in quantum theory.

A look at the wireframe view of the cat model

Development Challenges

Soft Kitty started out as a very modest experiment into developing a shell fur effect for mobile. The large amount of blending required is a challenge for many mobile graphics architectures. Despite this, we wanted to demonstrate that PowerVR Series6 GPUs can maintain high performance even when a large amount of alpha blending is required.

Initial proof of concept for the demo

Our initial proof-of-concept experiments, illustrated above, established that we could create a convincing static fur effect on a model. We then began planning a scene around an animated cat. Integrating the fur with the animated cat character proved to be a significant technical challenge.

Using a basic animated model, we began to develop some optimisations for rendering animated characters with fur. The use of transform feedback and instancing was introduced at this stage and continually refined and improved throughout development.

The final animated model was originally designed for offline rendering and, as such, has a high polygon count. Its animation used a large number of bones per vertex to perform the skinning. We quickly established that standard four-bone-per-vertex skinning would not be sufficient, as it created deformities around the tail and back. To address this, we separated the animation data from the model and created a custom 12-bone-per-vertex skinning system.
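To give a feel for why a four-bone limit can distort a heavily rigged mesh, the sketch below applies one common reduction strategy: keep the strongest influences and renormalise the remaining weights. The article does not say how the four-bone version was produced, so this approach, the function name and the sample weights for a hypothetical tail vertex are all assumptions.

```python
# Sketch of reducing a vertex's bone influences to a fixed limit.
# The strategy and the sample weights are assumptions for illustration.


def limit_influences(indices, weights, max_influences):
    """Keep the strongest influences and renormalise so the weights sum to 1."""
    ranked = sorted(zip(weights, indices), reverse=True)[:max_influences]
    total = sum(weight for weight, _ in ranked)
    kept_indices = [index for _, index in ranked]
    kept_weights = [weight / total for weight, _ in ranked]
    return kept_indices, kept_weights


# Hypothetical tail vertex influenced by eight bones with fairly even weights.
tail_indices = [3, 4, 5, 6, 7, 8, 9, 10]
tail_weights = [0.20, 0.18, 0.15, 0.12, 0.10, 0.10, 0.08, 0.07]

kept_indices, kept_weights = limit_influences(tail_indices, tail_weights, 4)
discarded = 1.0 - sum(sorted(tail_weights, reverse=True)[:4])
print(kept_indices, kept_weights, discarded)
```

With weights spread this evenly, roughly a third of the original influence is thrown away, which is the kind of loss that could plausibly show up as visible deformation in areas such as a tail. Raising the limit to 12 keeps every influence in this example untouched.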

Creating this system proved challenging in several areas: exporting the data from the modelling package into a custom storage format, loading it into the demo, reintegrating it with the mesh data, and finally applying the skinning. In parallel, we began work on a scene and chose to experiment with pre-computed light maps to create an attractive environment for the cat to move around in.

The image above shows an early version of the final cat model with basic lighting on a reflective floor, which was removed once we had decided on a final scene.


An illustration of some of the issues faced in implementing the 12 bone-per-vertex system, specifically reintegrating the animation data with the original mesh. This part of development gained the nickname “the polygon soup phase”.

With the skinning system finalised, we were able to continue development on other areas of the demo, adding features such as the laser pointer, wireframe modes and the slow motion system. Work on the static scene progressed well, with the late addition of a skybox adding a nice touch to the end of the demo sequence.

View from the window in the final scene

One of the additional challenges came in lighting the cat. To integrate it with the pre-computed scene, we eventually settled on shading the fur with a BRDF (bidirectional reflectance distribution function). The cat also casts soft shadows that integrate with the shadows in the pre-computed scene.

The final name for the demo was suggested by a colleague mid-development and ultimately stuck.

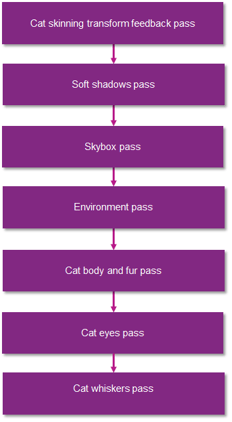

Rendering Sequence

The scene is built up over a number of passes. By taking advantage of transform feedback, the high-polygon cat mesh only has to be skinned once, and the resulting positions can then be reused for the shadow pass and for every shell of the body and fur pass. Whiskers were a late addition that we felt added to the overall level of detail of the scene.

Where to see Soft Kitty’s advanced OpenGL ES 3.0 features on display

The finalised demo has been several months in development and we are quite pleased with the results.

The demo was first shown at MWC 2014 where it received a very positive response. It will also be on display at the Imagination booth (#402) at GDC 2014, SIGGRAPH 2014 and all other major conventions.