- 17 May 2016

- Imagination Technologies

VR requires the support of many components in modern phones. The chain runs from the sensor that records the motion of the head, through the CPU driving the VR application (and everything else in the background) and the GPU doing the work for the VR application and the calculations for creating the VR-corrected image, to the display showing the transformed content to you, the observer.

All those components need to work closely together to create that immersive experience everybody is talking about. Much of the publicly available content calls the time this takes motion-to-photon latency. Although this is a very generic term, it describes the problem very well: how long after a head motion does the changed view reach my eyes and get processed by my brain?

In the real world, this happens instantly; otherwise you would run into walls all the time. However, creating this very same effect with a phone strapped into a head-mounted display turns out to be quite hard. There are different reasons for this: computers running at fixed clocks, the pipelining which serializes the processing of the data, and obviously the time it takes to render the final images.

We as a GPU IP company are of course interested in optimizing our graphics pipeline to reduce the time between the PowerVR graphics processor rendering the content and that content appearing on the display.

Composition requirements on Android

The final screen output on Android is rendered using composition. Usually there are several producers that create images on their own: SystemUI, which is responsible for the status bar and the navigation bar, and foreground applications, which render their content into their own buffers.

Those buffers are taken by a consumer that arranges them and this arrangement is then eventually displayed on the screen. Most of the time this consumer is a system application called SurfaceFlinger.

SurfaceFlinger may decide to put those buffers (in the correct arrangement) directly on the screen if the display hardware supports that. This mode is called Hardware Composition and needs direct support by the display hardware. If the display doesn’t support a certain arrangement, SurfaceFlinger will use a framebuffer the size of the screen and render all images with the correct state into that framebuffer by using the GPU. That final composition render will then be shown by the display as usual.

Illustrating the process of composition

Both the producers and the consumer are highly independent – actually they are even different processes which talk to each other using inter-process communication. Now, if one is not careful, all those different entities will trample over each other all the time.

For example, a producer may render into a buffer which is currently being displayed to the user. If this happens, the user of the phone sees a visual corruption called tearing: one part of the screen still shows the old content while the other part already shows the new content. Tearing is easily identifiable by its visible cut lines.

To prevent tearing, two key elements are necessary. The first is proper synchronization between all parties and the second is double buffering.

Synchronization is achieved by using a framework called Android Native Syncs. Android Native Syncs have certain properties which are important in the context of how they are used on Android. They are system-global syncs implemented in kernel space, and they are easily shareable between different processes by passing file descriptors in user mode.

They are also non-reusable binary syncs: they allow just the states “not signaled” and “signaled”, and have only one state transition, from “not signaled” to “signaled” (and not the other way around).
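To make the one-way state transition concrete, here is a minimal sketch in Python. It is a toy model only: `OneShotSync` is a hypothetical class built on `threading.Event`, not the Android kernel sync API, and it deliberately offers no way to go back to "not signaled".

```python
import threading


class OneShotSync:
    """Toy model of a binary, non-reusable sync (not the Android kernel API)."""

    def __init__(self):
        self._event = threading.Event()  # starts in the "not signaled" state

    def signal(self):
        # The only allowed transition: "not signaled" -> "signaled".
        # Signaling a second time is a harmless no-op.
        self._event.set()

    def is_signaled(self):
        return self._event.is_set()

    def wait(self, timeout=None):
        # Blocks until signaled; returns False if the timeout expires first.
        return self._event.wait(timeout)


# A producer thread signals once its work is done; the consumer waits on it.
sync = OneShotSync()
before = sync.is_signaled()
producer = threading.Thread(target=sync.signal)
producer.start()
arrived = sync.wait(timeout=1.0)
producer.join()
```

Because a sync can never be reset, every new piece of work needs a fresh sync object, which is what "non-reusable" means here.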

Double buffering allows the producer to render new content while the old content is still being used by the consumer. When the producer has finished rendering, the two buffers are switched and the consumer can present the new content while the producer starts rendering into the (now old) buffer again.
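The swap can be sketched with a small Python model. `DoubleBuffer` and its method names are made up for illustration and do not correspond to any Android or EGL interface; the point is only that the producer never writes into the buffer the consumer is reading.

```python
class DoubleBuffer:
    """Toy model of two buffers shared by one producer and one consumer."""

    def __init__(self):
        self.buffers = [None, None]
        self.front = 0  # index of the buffer the consumer shows on screen

    @property
    def back(self):
        return 1 - self.front  # index of the buffer the producer renders into

    def render(self, content):
        self.buffers[self.back] = content  # never touches the front buffer

    def swap(self):
        self.front = self.back  # the old front buffer becomes the new back

    def displayed(self):
        return self.buffers[self.front]


db = DoubleBuffer()
shown = []
for frame in ["frame 0", "frame 1", "frame 2"]:
    db.render(frame)
    shown.append(db.displayed())  # what is on screen while `frame` renders
    db.swap()
```

In this model the content on screen while a frame is being rendered is always the previous frame, so what the user sees trails the renderer by one frame.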

Both mechanisms are necessary for the fluid output of the user interface on Android, but unfortunately they also have a cost which is additional latency.

Having double buffering means the content rendered right now will be visible to the user one frame later. The synchronization also prevents access to anything which is on screen right now. To remove this particular latency, the idea of single buffering can be used: always render directly into the buffer which is on screen. Obviously, to make this happen the synchronization also needs to be turned off.

We implemented this feature through the EGL_KHR_mutable_render_buffer EGL extension, ratified by the Khronos Group just this March.

This extension needs support from both the GPU driver and the Android operating system. As I explained earlier, Android goes a long way to prevent this mode of operation because it results in tearing.

So how can we prevent tearing when running in the new single buffer mode?

Screen technologies

To answer this question we need to look into how displays work. The GPU driver posts a buffer to the display driver in a format the display can understand. There may be different requirements for the buffer memory format, like special striding or tiling alignments.

The display normally shows this new buffer on the next vsync. Assuming the display scans out this memory from top to bottom, the vsync, or vertical sync, is the point in time when the “beam” goes from the bottom back to the top of the screen.

Obviously nowadays there is no beam anymore, but this nomenclature is still in use. Because at this point in time the display is not scanning out from any buffer, it is safe to switch to a new one without introducing any tearing. The display repeats this procedure at a fixed time interval, the screen period.

A common screen period is 16.7ms. This means the image on screen is updated 60 times per second.

The process of display scan out


Modern phones usually have an aspect ratio of 16:9, and their screen is mounted so that the scan out direction is optimal for portrait mode, because this is how most users hold their phone day to day.

For VR this changes: the VR application runs in landscape, resulting in a scan out direction from left to right.

This becomes important when we look at how to update the buffer while it is still on the screen.

Strip rendering

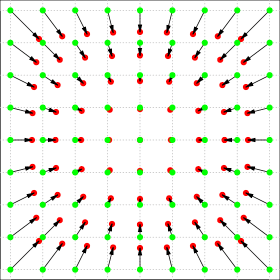

When the display is scanning out the buffer, it does so at a constant rate. The idea of strip rendering is to change only the part of the buffer which is not currently being scanned out. There are two different strategies available.

The first is to change the part of the screen where the scan out happens next. This is called beam racing, because we try to run in front of the beam and update the part of the memory which is just about to be shown. The other strategy is called beam chasing and means updating the part behind the beam.

Beam racing is better for latency, but at the same time harder to implement. The GPU needs to guarantee that it has finished rendering within a very tight time window. This guarantee can be hard to fulfil in a multi-process operating system where things happen in the background and the GPU may also render into other buffers at the same time. So the easier method to implement is beam chasing.
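The difference between the two strategies can be illustrated with a short Python sketch. It assumes a perfectly constant scan rate with no blanking interval and equally sized strips; all function names are hypothetical, and a real implementation would obtain timing from the display driver.

```python
def strip_under_beam(ms_since_vsync, period_ms, num_strips):
    """Index of the strip being scanned out right now.

    Assumes a constant scan rate and ignores any blanking interval.
    """
    phase = (ms_since_vsync % period_ms) / period_ms  # 0.0 <= phase < 1.0
    return int(phase * num_strips)


def beam_chasing_target(ms_since_vsync, period_ms, num_strips):
    # Update the strip the beam has just left; it is shown on the next refresh.
    current = strip_under_beam(ms_since_vsync, period_ms, num_strips)
    return (current - 1) % num_strips


def beam_racing_target(ms_since_vsync, period_ms, num_strips):
    # Update the strip the beam reaches next; it is shown within this refresh,
    # so the render must finish before the beam arrives.
    current = strip_under_beam(ms_since_vsync, period_ms, num_strips)
    return (current + 1) % num_strips


# 5 ms after the vsync, with a 16.7 ms period and four strips:
current = strip_under_beam(5.0, 16.7, 4)
chase = beam_chasing_target(5.0, 16.7, 4)
race = beam_racing_target(5.0, 16.7, 4)
```

With these numbers the beam is inside the second strip (index 1), so beam chasing would rewrite the first strip and beam racing the third. The racing target becomes visible much sooner, which is why it has lower latency and a far tighter deadline.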

Beam chasing strip rendering


The VR application asks the display for the time of the last vsync and aligns itself to it, so that the render has the full screen period available before presentation. To calculate when each strip should start rendering, we first need to define the strip size. The strip size, and therefore the number of strips, is implementation defined; it depends on how fast the display scans out and how fast we are able to render each strip. In most cases two strips are optimal. In VR this also has the benefit of giving one strip per eye (remember that we scan out from left to right), which makes the implementation much easier. The following example nevertheless uses four strips to show how this works in general. As I mentioned earlier, we have 16.7 ms to render the full image.

Consequently we have about 4.17 ms to render each strip. The VR application waits 4.17 ms after the last vsync and then starts rendering the first strip, which the display has just finished scanning out. It then waits another 4.17 ms (until about 8.34 ms after the last vsync) before rendering the second strip. This is repeated until all four strips are rendered, and then the sequence starts over again. Obviously we have to take into account how long each render submission takes and adjust our timing accordingly. Scheduling against absolute times has proven to be the most accurate method.
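The schedule described above can be sketched in Python as follows. This is an illustration under simplifying assumptions (constant scan rate, equally sized strips), not the actual driver or application code; `strip_start_times`, `sleep_until`, `render_frame` and the `render_strip` callback are hypothetical names.

```python
import time


def strip_start_times(last_vsync, period, num_strips):
    """Absolute start time for each strip when beam chasing.

    Strip i may be rewritten once the beam has moved past it, which is
    (i + 1) strip intervals after the vsync at a constant scan rate.
    """
    strip_time = period / num_strips
    return [last_vsync + (i + 1) * strip_time for i in range(num_strips)]


def sleep_until(deadline, clock=time.monotonic, sleep=time.sleep):
    # Sleep towards an absolute deadline, so that a late wake-up or a slow
    # submission does not push back every later strip.
    remaining = deadline - clock()
    if remaining > 0:
        sleep(remaining)


def render_frame(last_vsync, period, num_strips, render_strip):
    # last_vsync and period are in seconds on the time.monotonic() clock.
    starts = strip_start_times(last_vsync, period, num_strips)
    for index, start in enumerate(starts):
        sleep_until(start)
        render_strip(index)  # hypothetical callback that submits one strip


# The four-strip example from the text, in milliseconds relative to the vsync.
offsets_ms = strip_start_times(0.0, 16.7, 4)
```

Here `offsets_ms` holds the unrounded start offsets of the four strips: one, two, three and four quarters of the 16.7 ms period. Each deadline is derived from the vsync time rather than from the previous wake-up, so timing errors do not accumulate from strip to strip.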

Final thoughts

Reducing latency in VR applications on mobile devices is one of the major challenges facing developers today. This is different from desktop VR solutions which benefit from higher processing power and usually don’t encounter any thermal budget limitations.
